We embrace the idea that learning is much more than courses and assessments (though courses and assessments are part of it!). Learning happens across a wide variety of experiences, many of them social and experiential.

In building our Learning Experience Platform (LXP), Bedrock, we’ve reimagined e-learning. Along the way, we’ve tackled some interesting challenges, and they’ve been fun to overcome.

This post outlines just a few of the challenges we faced building a new kind of LXP. I focus on three: disparate and competing online learning systems, standards, products, initiatives, and protocols; the ins and outs of designing competency-based learning technology; and the complexities of delivering diverse forms of learning content.

Though a bit technical, this post should be useful to people in educational technology (builders, leaders, investors, etc.), online learning, and software development.

Challenge A: Disparate/Competing Solutions

Software developers and learning professionals alike will relate to this one: There are too many disparate and competing systems, standards, products, initiatives, and protocols in the e-learning space. An LXP could include all of these (and more):

- Learning Management System (LMS)

- Learning Record Store (LRS, sometimes sold as two pieces: a Transactional LRS and a second, “Authoritative” LRS)

- Enterprise Learner Record (ELR)

- Sharable Competency Definitions (SCD) and a Competency Framework Registry

- Experiences Index or Enterprise Course Catalog (or both)

- Learning Event Management (LEM) System

- Content Management System (CMS)

- Course authoring software

- Oh, and of course, you’ll need a cloud architecture to run it all, with a Relational Database Management System (RDBMS), Role-Based Access Control (RBAC), caching, security, and uptime and availability guarantees

The truth is, an LXP is all these things (and more). Building an LXP by tying together off-the-shelf products in each of these categories is tempting. After all, who wouldn’t want to save money by not building each piece from scratch?

But by the time you’ve gotten all these disparate systems to speak to each other well enough to accomplish the core objective, you’ve inevitably cut scope, sacrificed polish, compromised on your desired feature set, and still burned up your entire development budget. Worse, you’re left with an inflexible system: every change has to be threaded through the delicate, complex web of interconnections and dependencies between systems.

Perhaps the most destructive outcome is that your data is spread across all these systems and must be kept in sync. How many API calls does it take to answer the question, “Where did this learner leave off, and where should they go next?”

By building Bedrock from the ground up using open-source and commercial primitives such as PostgreSQL and Microsoft Azure Container Apps, we are able to build exactly what we need, using the right tools for the job. PostgreSQL is our RDBMS. It is also our raw time-series xAPI LRS, the graph datastore for our competency model, a message queue, and a cache for our game matchmaking system.

Our LMS, LRS, and ELR live in one monolithic codebase, organized into module libraries that can be composed into apps or scheduled jobs as needed. Our schema is defined in code and versioned. If we need to export data for a partner or integrate with a customer’s system, we do a one-time mapping from their competency model to ours, and we can provide all of it from one place.
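To make that one-time mapping concrete, here is a minimal sketch. It assumes both sides identify competencies by string IDs and that the mapping is a curated lookup table kept under version control; the IDs, record shapes, and `translateRecords` helper are hypothetical, not Bedrock’s actual schema.

```typescript
// Hypothetical record shapes, for illustration only.
interface PartnerRecord {
  learnerId: string;
  partnerCompetencyId: string;
  score: number; // assumed to be normalized to 0..1
}

interface MappedRecord {
  learnerId: string;
  competencyId: string;
  score: number;
}

// The one-time mapping: curated once, then versioned alongside the schema.
// Every ID here is a made-up example.
const partnerToBedrock: ReadonlyMap<string, string> = new Map([
  ["NET-101", "cs.networking.fundamentals"],
  ["SEC-200", "cyber.network-security"],
]);

// Translate partner records into our competency IDs. Records without a
// mapping are returned separately so they can be reviewed, not silently dropped.
function translateRecords(records: PartnerRecord[]): {
  mapped: MappedRecord[];
  unmapped: PartnerRecord[];
} {
  const mapped: MappedRecord[] = [];
  const unmapped: PartnerRecord[] = [];
  for (const r of records) {
    const competencyId = partnerToBedrock.get(r.partnerCompetencyId);
    if (competencyId === undefined) {
      unmapped.push(r);
    } else {
      mapped.push({ learnerId: r.learnerId, competencyId, score: r.score });
    }
  }
  return { mapped, unmapped };
}
```

Surfacing unmapped records is a deliberate choice in this sketch: a gap in the mapping should become a review item rather than silently missing learner history.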

The lesson? Don’t be hemmed in by the ecosystem. Stringing together lots of “easy” solutions imposes harsh limits on the customizability, extensibility, and overall performance of a system.

While it can be advantageous to build with existing ecosystem tools, make sure you’re adding third-party dependencies at the right level of abstraction. It doesn’t make sense for us to develop our own RDBMS, so we use an open-source one. We use xAPI (more on this below) for our time-series event schema because it serves our needs while being an industry standard. But our core web app? That’s custom all the way. The look and feel, the performance, the feature set: we control it completely.

Challenge B: Competency-Based, Self-Directed Learning

Taking a competency-based approach to learning enables rich features that make Bedrock stand apart from other solutions. The list of reasons for adopting a competency-based approach is long and out of scope for this blog post (if you need justification, look no further than the U.S. government’s own requirements and directives, such as those from the Office of Personnel Management and the Advanced Distributed Learning (ADL) initiative within the Department of Defense). Here, I focus on what a competency model does for the Bedrock platform specifically: it enables rich recommendations and remediations, self-directed mastery learning, and AI-based judgments about mastery levels.

For our competency model to enable all these features, we knew we needed to connect all types of activities holistically, across all disciplines. To accomplish this, we chose to implement our competency framework as a graph data structure. Though there are dedicated graph database products, our graph is implemented in PostgreSQL, which has great tools for traversing these kinds of relationships without sacrificing the awesome power of an RDBMS for application development.

The competency framework graph underpins and connects everything in Bedrock. All activities (courses, games, intel, discussions) are tagged with one or more competencies. The competencies are organized in a hierarchical tree structure according to domain, subdomain, and subject. This structure alone is already a powerful tool for building advanced features like recommendation engines (finding “related content” is a trivial tree traversal operation). Lots of advanced systems can be built on these explicit relationships. But the implicit relationships that are formed in this graph are more interesting.
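Here is a minimal in-memory sketch of that “related content” traversal, assuming each competency stores a pointer to its parent and each activity lists its competency tags (the shapes and IDs are hypothetical). In PostgreSQL, the subtree walk would typically be a `WITH RECURSIVE` query over a parent-ID column; this version only illustrates the logic.

```typescript
// Hypothetical shapes: each competency points at its parent
// (subject -> subdomain -> domain); activities list their competency tags.
interface CompetencyNode {
  id: string;
  parentId: string | null; // null for a top-level domain
}

interface TaggedActivity {
  id: string;
  competencyIds: string[];
}

// All IDs in the subtree rooted at rootId (root included), via iterative DFS.
function subtree(nodes: CompetencyNode[], rootId: string): Set<string> {
  const children = new Map<string, string[]>();
  for (const n of nodes) {
    if (n.parentId === null) continue;
    const list = children.get(n.parentId) ?? [];
    list.push(n.id);
    children.set(n.parentId, list);
  }
  const found = new Set<string>([rootId]);
  const stack: string[] = [rootId];
  while (stack.length > 0) {
    const current = stack.pop() as string;
    for (const child of children.get(current) ?? []) {
      if (!found.has(child)) {
        found.add(child);
        stack.push(child);
      }
    }
  }
  return found;
}

// "Related content": other activities tagged anywhere under the parent
// of one of this activity's competencies.
function relatedActivities(
  nodes: CompetencyNode[],
  activities: TaggedActivity[],
  activityId: string,
): string[] {
  const parentOf = new Map<string, string | null>();
  for (const n of nodes) parentOf.set(n.id, n.parentId);
  const target = activities.find((a) => a.id === activityId);
  if (target === undefined) return [];
  const scope = new Set<string>();
  for (const cid of target.competencyIds) {
    const parentId = parentOf.get(cid);
    if (parentId) subtree(nodes, parentId).forEach((id) => scope.add(id));
  }
  return activities
    .filter((a) => a.id !== activityId && a.competencyIds.some((c) => scope.has(c)))
    .map((a) => a.id);
}
```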

While the explicit tree structure makes finding related learning content easy, it can’t surface emergent and implied linkages. For example, an introductory course on computer networking is in the “Computer Science” domain, but it’s also useful to learners studying cybersecurity. It could even be mentioned in a broad “Introduction to Business Intelligence” course. As our content catalog grows (both with Bedrock-authored activities and community discussions), the number of linkages grows. That “Networking” competency is now linked to the “Computer Science” domain, the “Cybersecurity” subdomain, and the “Intro to Business Intelligence” course. Activities, competencies, domains, subjects, grades, learners, discussions, and games are all inherently linked through graph connections that form automatically when content is authored, and any of them can be reached by traversing those connections.
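A minimal sketch of how such implicit links can fall out of tagging alone, assuming each item simply lists its competency IDs (the shapes and IDs are hypothetical): two competencies become linked whenever some item is tagged with both, and the link grows stronger as more content connects them. Nobody draws these edges by hand.

```typescript
// Hypothetical shape: anything taggable (course, game, discussion, brief).
interface TaggedItem {
  id: string;
  competencyIds: string[];
}

// Undirected, weighted co-tagging graph: the weight of edge (a, b) counts
// how many items are tagged with both competency a and competency b.
function coTagGraph(items: TaggedItem[]): Map<string, Map<string, number>> {
  const graph = new Map<string, Map<string, number>>();
  const bump = (from: string, to: string): void => {
    const row = graph.get(from) ?? new Map<string, number>();
    row.set(to, (row.get(to) ?? 0) + 1);
    graph.set(from, row);
  };
  for (const item of items) {
    const tags = Array.from(new Set(item.competencyIds)); // ignore duplicate tags
    tags.forEach((a, i) => {
      for (const b of tags.slice(i + 1)) {
        bump(a, b);
        bump(b, a);
      }
    });
  }
  return graph;
}
```

Tagging an intro networking course with “networking”, “computer-science”, and “cybersecurity”, and a BI course with “business-intelligence” and “networking”, leaves “networking” linked to all three other competencies, even though no single item connects “cybersecurity” and “business-intelligence” directly.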

Are you seeing it yet? Suddenly, we can answer questions that traverse this implicit data model, the kinds of questions modern AI-enabled systems are expected to answer, such as:

- What other subjects often interest learners who find this intelligence brief useful?

- What games might be useful to recommend when a learner is stuck on this specific course module?

- What competencies do our biggest gamers excel at?

- Who are the trusted voices in the community on the subject of cybersecurity?

- Are there any intelligence feeds that this user might want to follow, based on their configured mastery goals?
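Take the first question. A minimal sketch, assuming we have “found this useful” signals per learner and a subject for each activity (the signal shape, IDs, and subjects are hypothetical), is a co-occurrence count: find the learners who found the brief useful, then tally the subjects of everything else they found useful. This is only the raw graph walk; it doesn’t normalize for subjects that are popular with everyone.

```typescript
// Hypothetical signal: a learner found an activity useful.
interface UsefulSignal {
  learnerId: string;
  activityId: string;
}

// Ranks subjects by the number of distinct learners who found the brief useful
// and also found something in that subject useful. The brief's own subject is excluded.
function subjectsOfInterest(
  signals: UsefulSignal[],
  subjectOf: ReadonlyMap<string, string>, // activityId -> subject
  briefId: string,
): Array<[string, number]> {
  const fans = new Set(
    signals.filter((s) => s.activityId === briefId).map((s) => s.learnerId),
  );
  const ownSubject = subjectOf.get(briefId);
  const learnersBySubject = new Map<string, Set<string>>();
  for (const s of signals) {
    if (s.activityId === briefId || !fans.has(s.learnerId)) continue;
    const subject = subjectOf.get(s.activityId);
    if (subject === undefined || subject === ownSubject) continue;
    const learners = learnersBySubject.get(subject) ?? new Set<string>();
    learners.add(s.learnerId);
    learnersBySubject.set(subject, learners);
  }
  const ranked: Array<[string, number]> = [];
  learnersBySubject.forEach((learners, subject) => ranked.push([subject, learners.size]));
  // Highest count first; ties broken alphabetically for stable output.
  return ranked.sort((a, b) => b[1] - a[1] || a[0].localeCompare(b[0]));
}
```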

One of the main challenges of self-directed learning is helping the user plot the route toward mastery. Different learners will need to take different routes through the learning content catalog depending on a variety of factors such as their previous education and professional experience. AI systems that we train on this web of interconnected learner data can find patterns and anomalies and give the right nudges, at the right time, to achieve better learning outcomes while also increasing engagement and enjoyment of the learning experience.
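As a minimal sketch of route planning, suppose (hypothetically; the post doesn’t describe how Bedrock encodes ordering) that competencies carry prerequisite edges. A learner’s route to a goal is then every unmastered prerequisite of the goal, ordered so that prerequisites come first: a topological order produced by a depth-first search. Two learners with different backgrounds get different routes from the same graph.

```typescript
// prerequisites: competencyId -> competencies that should be mastered first.
// Hypothetical data model, for illustration only.
function routeToMastery(
  goal: string,
  prerequisites: ReadonlyMap<string, string[]>,
  mastered: ReadonlySet<string>,
): string[] {
  const route: string[] = [];
  const done = new Set<string>();
  const inProgress = new Set<string>(); // guards against cycles in the data

  // Depth-first, post-order: a competency is added only after its prerequisites.
  // A mastered competency is skipped along with its prerequisites (assumed mastered too).
  const visit = (id: string): void => {
    if (mastered.has(id) || done.has(id)) return;
    if (inProgress.has(id)) throw new Error(`prerequisite cycle at ${id}`);
    inProgress.add(id);
    for (const pre of prerequisites.get(id) ?? []) visit(pre);
    inProgress.delete(id);
    done.add(id);
    route.push(id);
  };

  visit(goal);
  return route;
}
```

With “network-security” requiring “networking” and “crypto-basics”, both of which require “it-fundamentals”, a novice gets all four steps, while a learner who has already mastered “networking” and “it-fundamentals” gets only “crypto-basics” followed by “network-security”.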

An Aside: Learning Outcomes Over Engagement

Many apps and social media platforms treat user engagement as the most important metric (and with good reason; engagement — any kind of engagement — is a leading indicator of profitability for these free, ad-supported websites).

Bedrock is different: Our customers pay for licenses/seats in exchange for great learning outcomes. Not engagement, not “virality.” We measure mastery of knowledge.

While this distinction is important, we also recognize that engagement is a crucial ingredient in effective learning. But it must be meaningful engagement, unlike the clickbait, outrage, and false information that count as engagement on social media. Learners must feel motivated and connected to the content, and they should enjoy the experience of learning. We know that more time spent learning usually correlates with better learning outcomes, especially when learners have control over where they choose to spend their time.

There are several ways we promote learning outcomes that are compatible with engagement. The first is how we grant Experience Points (XP), our measure of engagement on Bedrock. XP can be earned for a variety of tasks: earning an achievement, finishing a course, answering someone else’s question on the forums, playing a game, and so on.

All XP grants are tied directly into our competency framework. Not only do we have a measure of a user’s total engagement, but we also have that score broken down to the competency level and deeply linked, through the graph database of our competency framework, to all other activities, subjects, and so on. When our AI calculates a learner’s mastery of a subject, it can weigh related XP grants, and may even weight certain XP grant types more heavily in certain subject areas if those grant types correlate more strongly with mastery there.
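A minimal sketch of per-competency XP weighting, assuming each grant records a type, an amount, and a competency, and that the per-type weights for each competency were learned elsewhere (for example, from how strongly each grant type has correlated with mastery). All names and numbers are hypothetical, and this is only one input a mastery model could use, not the model itself.

```typescript
// Hypothetical grant types, matching the examples in the text.
type XpGrantType = "achievement" | "courseCompleted" | "forumAnswer" | "gamePlayed";

interface XpGrant {
  learnerId: string;
  competencyId: string; // every grant is tied to a competency
  type: XpGrantType;
  xp: number;
}

// Per-competency multipliers for grant types; a missing entry means weight 1.
type GrantWeights = ReadonlyMap<string, Partial<Record<XpGrantType, number>>>;

// Weighted XP evidence for one learner in one competency.
function weightedXpEvidence(
  grants: XpGrant[],
  weights: GrantWeights,
  learnerId: string,
  competencyId: string,
): number {
  const w: Partial<Record<XpGrantType, number>> = weights.get(competencyId) ?? {};
  return grants
    .filter((g) => g.learnerId === learnerId && g.competencyId === competencyId)
    .reduce((sum, g) => sum + g.xp * (w[g.type] ?? 1), 0);
}
```

If forum answers are weighted 2x for “networking”, a learner with a 50 XP forum answer and a 20 XP game session in that competency scores 50 × 2 + 20 × 1 = 120.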

All gamification elements on Bedrock (achievements, XP, badges, and actual gameplay sessions) are embedded within the fabric of the competency framework graph and can give meaningful additional evidence to the learner record. (We have a dedicated blog post on gamification coming soon.)

Bedrock’s AI-powered recommendation engine also keeps users engaged by ensuring that every time they log in, they see content that interests them and will help them meet their goals. Most e-learning platforms leave it to users to figure out what to learn and in what order, without guidance. We have taken the best recommendation lessons from platforms such as Netflix, combined them with our competency framework, and applied them here.
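For a feel of the “interesting and goal-relevant” balance, here is a minimal content-based scoring sketch. It assumes each candidate is tagged with competencies and each learner has goal competencies plus a per-competency interest score between 0 and 1; the blend weight and field names are hypothetical. A Netflix-style system would typically also learn from other learners’ behavior, which this sketch leaves out.

```typescript
interface Candidate {
  id: string;
  competencyIds: string[];
}

interface LearnerProfile {
  goals: ReadonlySet<string>; // competencies the learner is working toward
  interest: ReadonlyMap<string, number>; // competencyId -> interest in [0, 1]
  completed: ReadonlySet<string>; // activity IDs already finished
}

// score = goalWeight * (share of tags that serve a goal)
//       + (1 - goalWeight) * (mean interest across tags)
function recommend(
  candidates: Candidate[],
  profile: LearnerProfile,
  limit: number,
  goalWeight = 0.6, // hypothetical blend; would need tuning in practice
): string[] {
  const scored = candidates
    .filter((c) => !profile.completed.has(c.id) && c.competencyIds.length > 0)
    .map((c) => {
      const n = c.competencyIds.length;
      const goalShare = c.competencyIds.filter((id) => profile.goals.has(id)).length / n;
      const interest =
        c.competencyIds.reduce((sum, id) => sum + (profile.interest.get(id) ?? 0), 0) / n;
      return { id: c.id, score: goalWeight * goalShare + (1 - goalWeight) * interest };
    });
  // Highest score first; ties broken by ID so results are deterministic.
  scored.sort((a, b) => b.score - a.score || a.id.localeCompare(b.id));
  return scored.slice(0, limit).map((s) => s.id);
}
```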

Challenge C: Diverse Forms of Learning Content

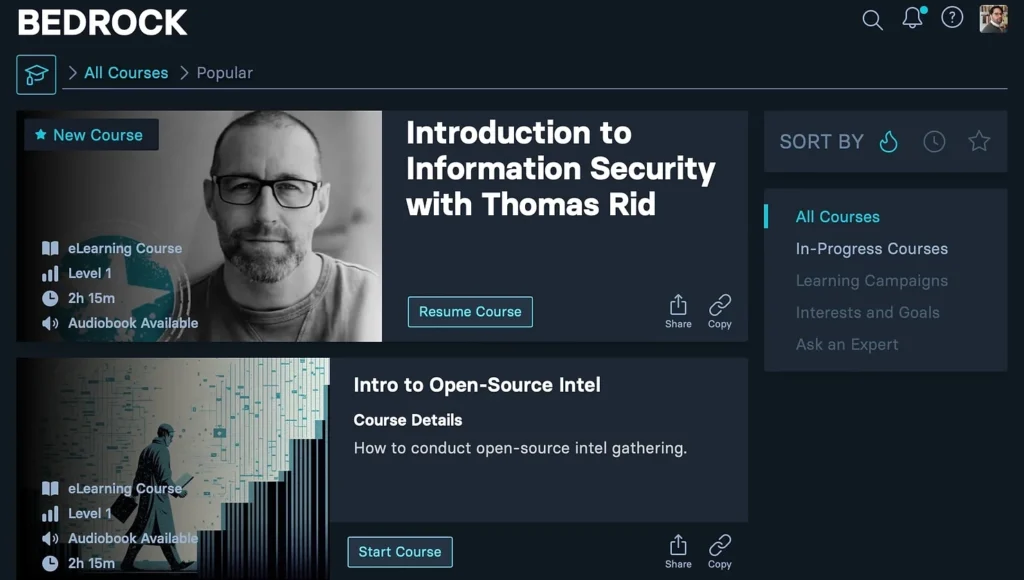

Bedrock supplies courses, games, discussions, and intelligence feeds. All of these have desired learning outcomes and tie into our broader competency model, but their delivery mechanisms couldn’t be more different.
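One way to express “same competency model, very different delivery” in TypeScript is a discriminated union: every activity kind shares the competency tags, and a `kind` field tells the client how to deliver it. The kinds mirror the four listed above; the fields and messages are a hypothetical sketch, not Bedrock’s data model.

```typescript
interface ActivityBase {
  id: string;
  title: string;
  competencyIds: string[]; // every kind ties into the competency model
}

type LearningActivity =
  | (ActivityBase & { kind: "course"; launchUrl: string })
  | (ActivityBase & { kind: "game"; sceneUrl: string })
  | (ActivityBase & { kind: "discussion"; threadId: string })
  | (ActivityBase & { kind: "intel"; feedId: string });

// Each kind gets its own delivery path.
function deliveryMechanism(a: LearningActivity): string {
  switch (a.kind) {
    case "course":
      return `launch courseware at ${a.launchUrl}`;
    case "game":
      return `load WebGL scene ${a.sceneUrl}`;
    case "discussion":
      return `open thread ${a.threadId}`;
    case "intel":
      return `subscribe to feed ${a.feedId}`;
    default: {
      // Compile-time exhaustiveness check: a new kind without a case fails here.
      const unreachable: never = a;
      return unreachable;
    }
  }
}
```

The `never` assignment in the `default` branch makes the compiler flag any new activity kind that the delivery code doesn’t handle yet.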

Thankfully, the browser and JavaScript ecosystems have modernized rapidly in recent years. With the retirement of Internet Explorer in 2022, all major browsers now share a much more complete baseline of compatibility, not just in scripting but also in presentation, markup, and performance, and even in advanced technologies such as WebGL (which allows us to render complex 3D scenes efficiently) and Progressive Web Apps (PWAs), which let us move beyond the browser sandbox in subtle but important ways. For example, on mobile phones, we can load a podcast-style audio playlist right from within a web app, so that users can navigate an audio course from their phone’s lock screen, among other things.
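Lock-screen navigation like this is what the standard Media Session API provides: a page registers handlers for actions such as `"nexttrack"` and `"previoustrack"` through `navigator.mediaSession.setActionHandler`. The post doesn’t say which API Bedrock uses, so treat this as a sketch under that assumption. The `ActionSessionLike` interface and the playlist class are hypothetical, and the session is injected so the logic can run outside a browser.

```typescript
// The small slice of the Media Session API this sketch relies on.
// In a browser, navigator.mediaSession provides setActionHandler.
interface ActionSessionLike {
  setActionHandler(action: string, handler: (() => void) | null): void;
}

interface AudioItem {
  title: string;
  src: string;
}

// Hypothetical playlist for an audio course; next/previous are no-ops at the ends.
class AudioCoursePlaylist {
  private index = 0;
  private readonly items: AudioItem[];
  private readonly play: (item: AudioItem) => void;

  constructor(items: AudioItem[], play: (item: AudioItem) => void) {
    this.items = items;
    this.play = play;
  }

  current(): AudioItem | undefined {
    return this.items[this.index];
  }

  next(): void {
    if (this.index < this.items.length - 1) this.moveTo(this.index + 1);
  }

  previous(): void {
    if (this.index > 0) this.moveTo(this.index - 1);
  }

  private moveTo(i: number): void {
    this.index = i;
    const item = this.current();
    if (item !== undefined) this.play(item);
  }
}

// Route the lock-screen "next" and "previous" buttons to the playlist.
function registerLockScreenControls(session: ActionSessionLike, playlist: AudioCoursePlaylist): void {
  session.setActionHandler("nexttrack", () => playlist.next());
  session.setActionHandler("previoustrack", () => playlist.previous());
}
```

In a browser, you would pass `navigator.mediaSession` (after checking `"mediaSession" in navigator`) and start an audio element’s playback inside the `play` callback; assigning a `MediaMetadata` object to `navigator.mediaSession.metadata` is what puts the current title on the lock screen.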

For this reason, we chose to embrace the web as a platform for dynamic learning content and deliver all our content as 100 percent web-native, JavaScript- and TypeScript-based, and lightweight. This has proven to be a winning strategy, but it meant grappling with decisions along the way about how to deliver each type of content in the best, most web-native way.

Games

There are fewer barriers than ever to delivering web games in-browser, without extensions or cumbersome extra downloads. WebGL support is rock-solid in all major desktop and mobile browsers. At Bedrock, we use WebGL canvases to embed our games directly into the flow of web content.

The difference maker for us has been PlayCanvas, a completely web-native game engine. PlayCanvas’s engine uses a combination of high-performance JavaScript and WebAssembly modules to provide a baseline of all the functionality expected of any game engine (3D meshes, materials, shaders, animations, etc.) that is reliable across all browsers. With this as a base, we have been able to iterate quickly as we build our games, easily testing on mobile and desktop simultaneously as we make tweaks and get feedback in real time.

Another benefit of using the PlayCanvas engine is that as the web evolves, our baseline functionality gets updated along with it, “for free.” For example, PlayCanvas is working to adopt the bleeding-edge WebGPU standard, which promises important performance gains, such as lower CPU overhead and access to GPU compute shaders. Bedrock will benefit from this work as soon as it is available, without needing to rebuild our games.
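Games can pick up a new graphics backend without being rebuilt because the choice is made at runtime by feature detection. An engine like PlayCanvas is where that choice would normally live; this is not PlayCanvas code, only an illustration of the kind of detection involved. It relies on two standard browser facts: WebGPU is exposed as `navigator.gpu`, whose `requestAdapter()` can resolve to `null`, and WebGL contexts are requested with `canvas.getContext("webgl2")` or `"webgl"`. The probe interfaces are hypothetical stand-ins so the logic runs outside a browser.

```typescript
type GraphicsBackend = "webgpu" | "webgl2" | "webgl" | "none";

// Minimal, hypothetical shapes of the browser objects being probed.
// navigator.gpu exists only where WebGPU is supported.
interface GpuProbe {
  requestAdapter(): Promise<unknown>; // resolves to null when no adapter is available
}
interface NavigatorProbe {
  gpu?: GpuProbe;
}
interface CanvasProbe {
  getContext(kind: "webgl2" | "webgl"): unknown; // null when the context is unavailable
}

// Prefer WebGPU when an adapter is actually available, then WebGL 2, then WebGL 1.
async function pickBackend(nav: NavigatorProbe, canvas: CanvasProbe): Promise<GraphicsBackend> {
  const gpu = nav.gpu;
  if (gpu !== undefined) {
    try {
      const adapter = await gpu.requestAdapter();
      if (adapter !== null && adapter !== undefined) return "webgpu";
    } catch {
      // Treat a failed request as "WebGPU unavailable" and fall through.
    }
  }
  if (canvas.getContext("webgl2")) return "webgl2";
  if (canvas.getContext("webgl")) return "webgl";
  return "none";
}
```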

I have a lot more to say about games and how we will be employing generative AI to accelerate game development, but that will be the topic for a future post of its own.

Courses

Thankfully, many commercial online course offerings are finally moving beyond the animation-heavy (and, until recently, often Flash-based) format to a more accessible, HTML-based format. The e-learning community is adaptive, and in making that transition, it managed to maintain compatibility with the systems that host this content. Yes, I’m talking about the Sharable Content Object Reference Model (SCORM) (and xAPI, and cmi5, and the other related standards that can collectively be called “courseware delivery”).

Instructional designers have many tools at their disposal to create e-learning courses. In our attempt to disrupt and improve the e-learning ecosystem, it’s important that we don’t try to “boil the ocean” and lose the valuable parts in the process. Regardless of the medium (HTML text and video, interactive JS, or even legacy Flash-based content), all e-learning content shares the need to track learners’ progress through the modules and record the results of their assessments. That’s where xAPI (and SCORM/cmi5) comes in.

The xAPI protocol is clever. It’s a standard for recording learner activity that all major learning tools can interoperate with. That wide adoption alone is enough reason to use it, but where it really shines is in its extensibility. It supports unlimited “vocabularies,” or dialects, that let you extend it for any use case.

The baseline dialects already have everything you need to record learner progression through traditional e-learning: the ability to record events such as “Enrolled,” “Attempted,” “Passed,” and “Failed,” for example. Statements that use these predefined xAPI dialects can be readily shared between disparate systems, and all e-learning systems know what to do with that data. But extending xAPI with custom dialects is the real superpower.
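For readers who haven’t seen one, an xAPI statement is a JSON object built around an actor, a verb, and an object, optionally with a result. Verbs are identified by IRIs, and the ADL vocabulary includes `http://adlnet.gov/expapi/verbs/attempted`, `.../passed`, and `.../failed`. The sketch below types only a small subset of the statement model; the `assessmentStatement` helper is hypothetical, and any learner or activity IRIs you pass it are placeholders.

```typescript
// A small subset of the xAPI statement model (see the xAPI specification for the rest).
interface XapiStatement {
  actor: { objectType: "Agent"; name?: string; mbox: string };
  verb: { id: string; display: Record<string, string> };
  object: { objectType: "Activity"; id: string; definition?: { name?: Record<string, string> } };
  result?: { success?: boolean; completion?: boolean; score?: { scaled?: number } };
  context?: { extensions?: Record<string, unknown> };
  timestamp?: string;
}

const ADL_VERBS = {
  attempted: "http://adlnet.gov/expapi/verbs/attempted",
  passed: "http://adlnet.gov/expapi/verbs/passed",
  failed: "http://adlnet.gov/expapi/verbs/failed",
} as const;

// Build a "passed" or "failed" statement from an assessment score.
// xAPI defines result.score.scaled on -1..1; this sketch assumes a 0..1 score.
function assessmentStatement(
  learnerEmail: string,
  activityId: string,
  scaledScore: number,
  passingScore: number,
): XapiStatement {
  const passed = scaledScore >= passingScore;
  return {
    actor: { objectType: "Agent", mbox: `mailto:${learnerEmail}` },
    verb: {
      id: passed ? ADL_VERBS.passed : ADL_VERBS.failed,
      display: { "en-US": passed ? "passed" : "failed" },
    },
    object: { objectType: "Activity", id: activityId },
    result: { success: passed, completion: true, score: { scaled: scaledScore } },
    timestamp: new Date().toISOString(),
  };
}
```

Because the verb IRIs come from the shared ADL vocabulary, any conformant LRS can interpret a statement like this without knowing anything about the system that produced it.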

At Bedrock, we combine the traditional xAPI dialect with additional properties to describe things like “Joined Game,” “Started Discussion,” or even much more nuanced events like “Had their answer accepted as the correct answer in the community Q&A forum.” This means that a learner’s record in Bedrock is the union of two datasets: Everything described in the xAPI baseline, plus all the Bedrock-specific events and nuance. This data is all stored in one place, in one schema, with the appropriate metadata to filter, aggregate, analyze, and interoperate with systems both inside and outside Bedrock. Other systems can interoperate with Bedrock data at a baseline level, and with a bit of work (a one-time mapping is usually all it takes), can even be made to understand Bedrock-specific data points as well.
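A minimal sketch of the “union of two datasets” idea and of baseline-level interoperability. Baseline ADL verbs pass through untouched; platform-specific verbs live under their own IRI namespace and, for a partner that only understands the baseline, are either translated through a one-time verb mapping or withheld. The `https://example.com/bedrock/verbs/` namespace, the flattened `MinimalStatement` shape, and the mapping choices are hypothetical placeholders, not Bedrock’s vocabulary.

```typescript
const ADL_PREFIX = "http://adlnet.gov/expapi/verbs/";
const CUSTOM_PREFIX = "https://example.com/bedrock/verbs/"; // hypothetical namespace

interface VerbRef {
  id: string;
  display: Record<string, string>;
}

// Hypothetical flattened statement, just enough to show the idea.
interface MinimalStatement {
  actorMbox: string;
  verb: VerbRef;
  objectId: string;
}

// One-time mapping from custom verbs to the closest baseline verb.
// null means "no acceptable baseline equivalent": withhold from baseline exports.
const CUSTOM_TO_BASELINE: ReadonlyMap<string, string | null> = new Map<string, string | null>([
  [`${CUSTOM_PREFIX}joined-game`, `${ADL_PREFIX}attempted`],
  [`${CUSTOM_PREFIX}answer-accepted`, null],
]);

function isBaseline(verbId: string): boolean {
  return verbId.startsWith(ADL_PREFIX);
}

// Export view for a partner that only understands baseline verbs.
function projectToBaseline(statements: MinimalStatement[]): MinimalStatement[] {
  const out: MinimalStatement[] = [];
  for (const s of statements) {
    if (isBaseline(s.verb.id)) {
      out.push(s);
      continue;
    }
    const mapped = CUSTOM_TO_BASELINE.get(s.verb.id);
    if (mapped) {
      const label = mapped.slice(ADL_PREFIX.length);
      out.push({ ...s, verb: { id: mapped, display: { "en-US": label } } });
    }
    // Unmapped or explicitly withheld custom verbs are skipped.
  }
  return out;
}
```

Withholding rather than guessing is the conservative default here: an unmapped custom verb stays in the full record and simply doesn’t appear in the baseline view until someone decides what it should map to.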

Key Takeaways

- Consolidated learning platforms offer richer experiences without sacrificing interoperability.

- By building a holistic LXP, Bedrock has sidestepped much of the complexity of the ecosystem to produce a platform that is both more effective and simpler than the alternatives.

- Bedrock has taken competency-based learning to a new level.

- Lightweight, web-native delivery enables a smooth, integrated experience across courses, games, and intel.

- We’re able to package it beautifully, with a great user experience that guides learners toward mastery without getting in the way.